Overview

Over the last decade, this field has attracted tremendous interest, and great success has been achieved on various video-centric tasks (e.g., action recognition, detection, and segmentation) based on conventional RGB videos. In recent years, with the explosion of video data and growing application demands (e.g., video editing, AR/VR, and human-robot interaction), significantly more effort is required to enable intelligent systems to perceive, understand, and generate human actions in different scenarios from multimodal inputs. Moreover, the recent development of large language models (LLMs) and large multimodal models (LMMs) brings new trends and challenges to be discussed and addressed. The goal of this workshop is to foster interdisciplinary communication among researchers and to draw the broader community's attention to this field. Through this workshop, current progress and future directions will be discussed, and new ideas and discoveries in related fields are expected to emerge. The topics include, but are not limited to:

- Perception: human pose/mesh recovery from multimodal signals;

- Understanding: scene-human-object interaction, multimodal (RGB/depth/skeleton) action recognition, detection, segmentation, and assessment;

- Generation: text/music-driven human action generation;

- Foundations and beyond: large language models/large multimodal models for action representation learning, datasets and evaluation, and learning from human demonstration.

Schedule

Time |

Speaker |

Content |

| 13:30 pm - 13:40 pm PT | Organizing Committee | Opening Remark |

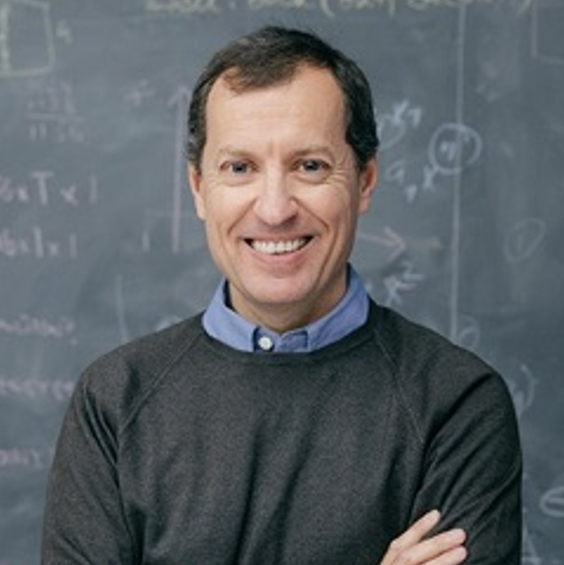

| 13:40 pm - 14:10 pm PT | Georgios Pavlakos | Invited Talk1 |

| 14:10 pm - 14:40 pm PT | Lorenzo Torresani | Invited Talk2 |

| 14:40 pm - 15:10 pm PT | Zicheng Liu | Invited Talk3 |

| 15:10 pm - 15:40 pm PT | Kristen Grauman | Invited Talk4 |

| 15:40 pm - 16:10 pm PT | Ivan Laptev | Invited Talk5 |

| 16:10 pm - 16:40 pm PT | Siyu Tang | Invited Talk6 |

| 16:40 pm - 17:10 pm PT | Jiajun Wu, Jiaman Li | Invited Talk7 |

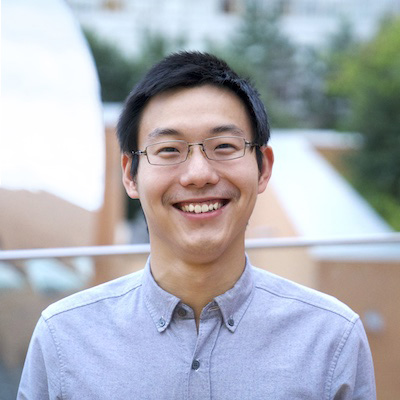

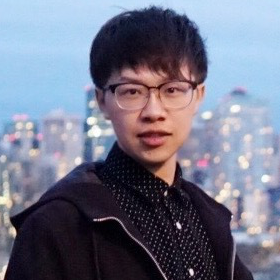

| 17:10 pm - 17:20 pm PT | Jieming Cui | Spotlight Presentation1 |

| 17:20 pm - 17:30 pm PT | Lingmin Ran | Spotlight Presentation2 |

| 17:30 pm - 17:40 pm PT | Ao Li | Spotlight Presentation3 |

Speakers

Spotlight Presenters

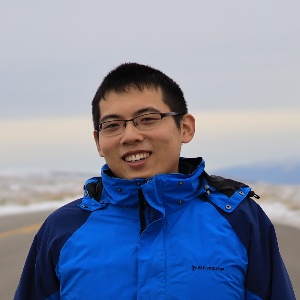

Organizers

* Equal Contribution